Google Algorithm Update 2023-2024: Every search engine relies on what is commonly called an algorithm. The search engine uses this formula to assess web pages and decide their relevance and value when crawling them for possible inclusion in its index. A crawler is a robot that browses these pages on the search engine’s behalf.

If you’re looking for some simple things you can do to improve your site’s position in the search engines or directories, this post is for you. We discuss the Google Algorithm Update 2023-2024 below.

What is a core update?

A core update is a significant change that Google makes to its search algorithms and systems. These changes affect how Google ranks and shows websites on its search pages. Google does core updates several times a year to improve the search experience for users.

Google Key Algorithm

Google has thorough, highly developed technology, a straightforward interface, and a wide array of search tools that let users access information on the web easily.

Google users can browse the web and find information in different languages, view maps and stock quotes, read the news, look for a long-lost friend using the phonebook listings available on Google for all US and Indian cities, and essentially surf the 3 billion-odd web pages on the web!

Google boasts the world’s largest archive of Usenet messages, dating back to 1981. Google’s technology can be accessed from any ordinary desktop PC as well as from various wireless platforms, such as WAP and i-mode phones, handheld devices, and other Internet-enabled devices.

What’s new with Google in 2024?

Many people are curious about what Google is doing with its search algorithms and systems. Google uses these rules and methods to rank and show websites on its search pages. Google changes these rules and methods often, sometimes in significant ways. These big changes are called core updates, and Google tells us about them on its Search ranking updates page.

Local ranking update

Some sources say that Google changed its local ranking algorithm on January 4 and 9, 2024. This is the rule that Google uses to rank and show websites related to a specific location. This change may have affected some websites’ local search traffic and rankings. But Google has not confirmed this change, and we don’t know how big or small it is.

Circle to Search feature

Another new thing that Google may launch in 2024 is the Circle to Search feature. Google showed us this feature on January 17, 2024. This feature lets users search for anything on their Android phone screen without leaving the app they are using. They can do this by circling, highlighting, scribbling, or tapping. This feature will be available on some premium Android phones worldwide on January 31, 2024.

Multisearch experience

Google may also keep improving its multisearch experience, which started in 2022. This feature lets users point their camera (or upload a photo or screenshot) and ask questions using the Google app. Then, they get results with intelligent insights that are more than just visual matches.

How to make your content better for Google?

As Google’s algorithms get more intelligent and focus on users, website owners and content creators must ensure their content is helpful, trustworthy, and high-quality. It should also match the needs and wants of their target audience.

Google has a help page for creating content for people, not for machines. It also has some questions you can ask yourself to check and improve your content. Google says there is nothing wrong with pages that may not do as well as they did before a core update and that site owners don’t need to fix anything specific. But they can still review their content and make sure it is relevant, accurate, and up-to-date.

How do I know if my website is affected by a core update?

Google’s Significant Changes in 2024

Google has changed its search rules and methods a lot. These are called core updates, and they can make your website rank higher or lower on Google’s search pages. You can do these things to see if your website is affected by a core update:

- See if Google has told us about a core update on its [Search ranking updates] page or [Search Central] blog. Core updates happen every few months and have names like the May 2022 Core Update.

- Wait for the core update to finish, which can take weeks. Your website’s ranking and traffic may rise or fall during this time.

- Use tools like [Google Analytics 4] or [Google Search Console] to check your website’s data. Look for changes in your traffic, ranking, search volume, and other things before and after the core update.

- See how your website compares to your competitors and others in your field. You can use tools like [SEMRush] or [Ahrefs] to see your rankings and how you match up to them.

- Check your website’s content quality and how well it fits your audience. Google’s core updates want to give the best results to users, so you should make your content helpful, reliable, and high-quality. You can use Google’s [help page] to create content for people, not for machines, and [self-assessment questions] to check and improve your content.
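The before-and-after comparison described in the list above can be sketched in a few lines of Python. This is only an illustration: the daily click counts are made-up numbers and `percent_change` is a hypothetical helper. In practice you would export the figures from Google Search Console or Google Analytics 4.

```python
from statistics import mean


def percent_change(before, after):
    """Return the percentage change in average daily clicks between two periods."""
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline period has no traffic to compare against")
    return (mean(after) - baseline) / baseline * 100


# Hypothetical daily clicks for the week before and the week after a rollout finished.
clicks_before = [120, 130, 125, 118, 122, 140, 135]
clicks_after = [100, 105, 98, 110, 102, 115, 108]

change = percent_change(clicks_before, clicks_after)
print(f"Average daily clicks changed by {change:.1f}%")
```

Comparing averages over equal-length periods smooths out day-to-day noise, but it does not separate the effect of a core update from seasonality or other changes on your site.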

Don’t worry if your website does worse after a core update. Google says there is nothing wrong with pages that may not do as well as before and that site owners don’t need to fix anything specific. But you can still improve your content and website and wait for the next core update to see positive changes.

Name and date of Google’s last update

The last core update that Google announced for its search algorithm and systems before 2024 was the November 2023 core update. It began rolling out on November 2, 2023, and took about four weeks to roll out fully, finishing on November 28, 2023. This update was designed to improve how Google assesses and ranks content on its search pages.

Some other updates that Google may have introduced in 2024 are:

- A possible local ranking update on January 4 and 9, 2024, which may have affected some websites’ local search traffic and rankings. Google has not yet confirmed this update.

- The Circle to Search feature lets users search for anything on their Android phone screen without switching apps. This feature was unveiled on January 17, 2024, and is expected to launch globally on select premium Android phones on January 31, 2024.

- The multisearch experience uses AI to provide intelligent insights based on users’ camera or photo queries. This feature was launched in 2022 and is likely to continue improving in 2024.

Importance of Google Algorithm Update

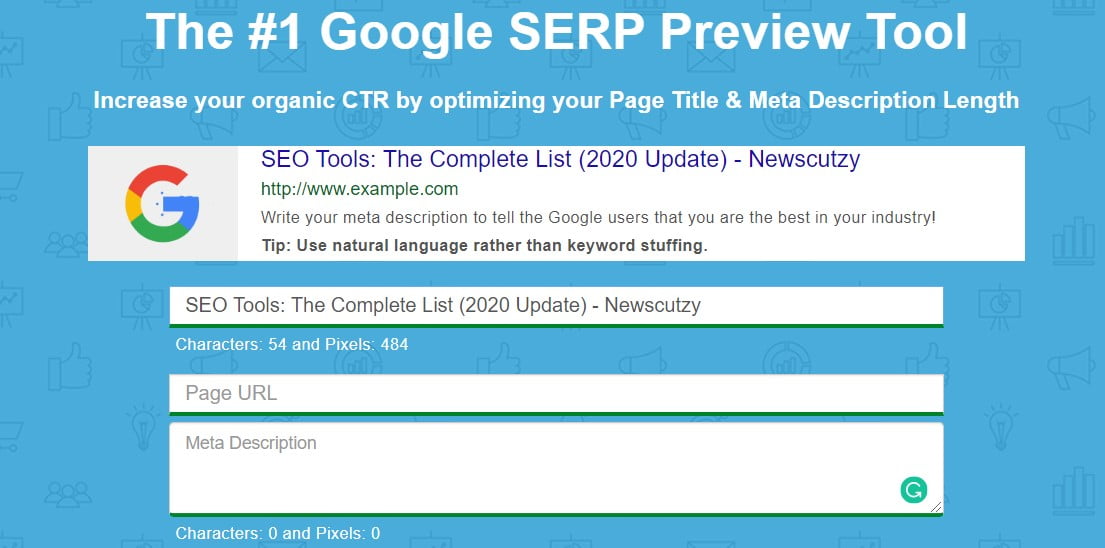

Optimal title length

Google typically shows the first 50–60 characters of a title tag. If you keep your titles under 60 characters, our research suggests that you can expect about 90% of your titles to display properly. There’s no exact character limit since characters vary in width, and Google’s display titles max out (currently) at 600 pixels. So, keep your titles under 600 pixels wide.
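As a rough illustration of the 60-character guideline, here is a minimal Python sketch. The function names are hypothetical, and the check counts characters only. It does not measure pixel width, which depends on the font and on the specific characters used.

```python
MAX_TITLE_CHARS = 60  # upper end of the 50-60 character range mentioned above


def title_fits(title, limit=MAX_TITLE_CHARS):
    """Return True if the title is within the character limit."""
    return len(title.strip()) <= limit


def truncate_title(title, limit=MAX_TITLE_CHARS):
    """Shorten a title at a word boundary and append an ellipsis if it is too long."""
    title = title.strip()
    if len(title) <= limit:
        return title
    # Reserve three characters for the ellipsis, then drop the last partial word.
    cut = title[: limit - 3].rsplit(" ", 1)[0]
    return cut + "..."


short_title = "Google Algorithm Update 2023-2024"
long_title = (
    "How To Do SEO For Beginners: A Complete Step-By-Step Guide "
    "For New Website Owners"
)

print(title_fits(short_title), title_fits(long_title))
print(truncate_title(long_title))
```

A character count is a convenient first check. A SERP preview tool gives a more faithful picture because it renders the title at its real width.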

See the picture below.

CHECK YOUR TITLE AND META DESCRIPTION FOR BETTER SEO HERE: Google SERP Preview Tool


Google Algorithm Update: Calculating PageRank by Popularity

The web search technology offered by Google is often the technology of choice for the world’s leading portals and websites. It has also benefited advertisers with its unique advertising program, which doesn’t hamper the web surfing experience of its users yet still brings revenue to the advertisers.

When you search for a specific phrase or keyword, most search engines return a list of pages ranked by the number of times the keyword appears on the site.

Google’s web search technology uses its in-house PageRank technology and hypertext-matching analysis, which perform several instantaneous calculations with no human intervention. Google’s structural design also scales as the internet expands.

PageRank technology uses an equation that contains many variables and terms and determines an objective measure of the importance of web pages. It is calculated by solving an equation of 600 million variables and more than 4 billion terms.

Unlike other search engines, Google doesn’t simply count links but uses the extensive link structure of the web as an organizational tool. When Page B links to Page C, that link is credited as a vote for Page C on behalf of Page B.
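The idea of links acting as votes can be sketched with the textbook, simplified form of PageRank. This is not Google’s production system. The damping factor of 0.85, the number of iterations, and the three-page graph are all assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Compute simplified PageRank scores.

    `links` maps each page to the list of pages it links to. Every link
    target is assumed to also appear as a key in `links`.
    """
    pages = list(links)
    n = len(pages)
    ranks = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new_ranks = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            if not outgoing:
                # A page with no outgoing links spreads its vote over all pages.
                share = damping * ranks[page] / n
                for target in pages:
                    new_ranks[target] += share
            else:
                # Otherwise its vote is split evenly among the pages it links to.
                share = damping * ranks[page] / len(outgoing)
                for target in outgoing:
                    new_ranks[target] += share
        ranks = new_ranks
    return ranks


# Hypothetical three-page web: A and B both link to C, and C links back to A.
graph = {"A": ["C"], "B": ["C"], "C": ["A"]}
scores = pagerank(graph)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

In this toy graph, Page C ends up with the highest score because two pages vote for it, Page A comes second because it receives C’s vote, and Page B, which nobody links to, keeps only the baseline score.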

Hypertext-Matching Analysis

Unlike its conventional counterparts, Google is a hypertext-based search engine. This means it analyzes all the content on each web page and factors in fonts, subdivisions, and the exact positions of all terms on the page.

Not only that, but Google also evaluates the content of neighboring web pages. This policy of not disregarding any subject matter pays off and enables Google to return results closest to user queries.

Google has a very simple 3-step procedure for handling a query submitted in its search box:

- When the query is submitted and the enter key is pressed, the web server sends the query to the index servers. The index server is exactly what its name suggests. It consists of an index, much like the index of a book, which shows where the particular page containing the queried term is located.

- After this, the query proceeds to the doc servers, which retrieve the stored documents. Page descriptions or “snippets” are then generated to suitably describe each result.

- These results are then returned to the user in under one second! (Typically.)
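The three steps above can be sketched as a toy inverted index in Python. This is a heavily simplified illustration, not how Google’s servers are implemented. The documents, the function names, and the single-word query are all assumptions.

```python
import re
from collections import defaultdict

# Hypothetical stored documents, standing in for what the doc servers hold.
DOCUMENTS = {
    "page1": "Google updates its search algorithm several times a year.",
    "page2": "A crawler follows hyperlinks from site to site.",
    "page3": "Core updates change how the search algorithm ranks pages.",
}


def build_index(documents):
    """Map each lowercase word to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in re.findall(r"[a-z0-9]+", text.lower()):
            index[word].add(doc_id)
    return index


def make_snippet(text, term, width=30):
    """Return a short excerpt of the text around the first occurrence of term."""
    position = text.lower().find(term)
    start = max(position - width, 0)
    end = min(position + len(term) + width, len(text))
    return text[start:end].strip()


def search(query, index, documents):
    """Look up a single-word query, fetch matching documents, and build snippets."""
    term = query.lower()
    doc_ids = sorted(index.get(term, set()))  # step 1: consult the index
    return [
        (doc_id, make_snippet(documents[doc_id], term))  # step 2: fetch and describe
        for doc_id in doc_ids
    ]  # step 3: the list is what gets returned to the user


index = build_index(DOCUMENTS)
results = search("algorithm", index, DOCUMENTS)
for doc_id, snippet in results:
    print(doc_id, "->", snippet)
```

The index is built once, ahead of time, so answering a query only requires a dictionary lookup rather than a scan of every document, which is the same reason a book index is faster than reading the whole book.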

Around once every month, Google refreshes its index by recalculating the PageRank of each of the web pages that it has crawled. The period during the update is known as the “Google Dance”.

Submitting Your URL to Google

Google is a fully automated search engine with no human intervention involved in the search process. It uses robots known as ‘spiders’ to constantly crawl the web for new updates and new sites to be included in the Google index. This robot software follows hyperlinks from site to site.

Google doesn’t require you to submit your URL to its database for inclusion in the index, as the ‘spiders’ do this anyway. Nonetheless, a URL can be submitted manually by going to the Google site and clicking the related link.

One important point here is that Google doesn’t accept payment of any kind for site submission or for improving your site’s page rank. Also, submitting your site through Google doesn’t guarantee that it will be listed in the index.

Cloaking

Sometimes, a webmaster may program the server to return different content to Google than it returns to regular users, often to distort search engine rankings. This practice is referred to as cloaking, as it conceals the actual site and returns distorted web pages to the search engines crawling it.

This can mislead users about what they’ll find when they click on a search result. Google strongly objects to any such practice and may ban a site that is found guilty of cloaking.

Google Guidelines

Here are some important tips and techniques to use when dealing with Google.

FOLLOW

- A site should have a clear hierarchy and links and should ideally be easy to navigate.

- A site map is needed to help users get around your site. If the site map has over 100 links, it is advisable to break it into several pages to avoid clutter.

- Come up with essential and precise keywords and ensure that your site features relevant and informative content.

- The Google crawler won’t recognize text hidden in images, so when describing important names, keywords, or links, stick with plain text.

- The TITLE and ALT tags should be descriptive and accurate, and the site should have no broken links or incorrect HTML.

- Dynamic pages (URLs containing a ‘?’ character) should be kept to a minimum, as not every search engine spider can crawl them.

- The robots.txt file on your web server should be present and should not block the Googlebot crawler. This file tells crawlers which directories can or can’t be crawled.
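To check that a robots.txt file does not accidentally block Googlebot, one option is Python’s standard `urllib.robotparser` module. The sketch below parses an inline, hypothetical robots.txt. The rules and the example.com URLs are assumptions for illustration, and a real check would read the file served from your own domain.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real file lives at /robots.txt on your domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def googlebot_allowed(url):
    """Return True if the parsed rules let Googlebot fetch the given URL."""
    return parser.can_fetch("Googlebot", url)


for url in (
    "https://example.com/blog/post",
    "https://example.com/drafts/unfinished",
):
    print(url, "->", "allowed" if googlebot_allowed(url) else "blocked")
```

Note that a crawler follows the group that names it specifically, so in this example Googlebot obeys only the `/drafts/` rule, while other crawlers fall back to the `*` group.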

Don’t

- When making a website, don’t cheat your users, that is, the people who will surf your site. Don’t give them irrelevant content or present them with fraudulent schemes.

- Avoid tricks or link schemes intended to increase your site’s ranking.

- Do not use hidden text or hidden links.

- Google disapproves of sites that use cloaking. Consequently, it is advisable to avoid it.

- Automated queries should not be sent to Google.

- Avoid stuffing pages with irrelevant words and content. Don’t create multiple pages, subdomains, or domains with substantially duplicated content.

- Avoid “doorway” pages made only for search engines or other “cookie-cutter” approaches, for example, affiliate programs with hardly any original content.

Spider/Crawler Considerations after Google Algorithm Update

Additionally, consider technical factors. If a site has a slow connection, it may time out for the crawler. Very complex pages, too, may time out before the crawler can collect the content.

If you have a hierarchy of directories at your site, put the most important information high, not deep. Some search engines will presume that the higher you place the information, the more important it is. Also, crawlers may not venture deeper than three, four, or five directory levels.
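To spot pages that sit too deep in the directory hierarchy, you could count the directory levels in each URL. The following is a minimal sketch. The function names, the example.com URLs, and the threshold of three levels (the lower end of the range mentioned above) are assumptions.

```python
from urllib.parse import urlparse

MAX_DEPTH = 3  # lower end of the "three, four, or five" levels mentioned above


def directory_depth(url):
    """Count the directory levels between the site root and the page itself."""
    path = urlparse(url).path
    # Drop the final segment (the page), then count the remaining directories.
    directories = path.rsplit("/", 1)[0]
    return len([part for part in directories.split("/") if part])


def too_deep(url, limit=MAX_DEPTH):
    """Return True if the page sits deeper than the chosen limit."""
    return directory_depth(url) > limit


urls = [
    "https://example.com/index.html",
    "https://example.com/guides/seo/basics.html",
    "https://example.com/a/b/c/d/e/page.html",
]
for url in urls:
    print(directory_depth(url), too_deep(url), url)
```

Running a check like this over a list of your own URLs gives a quick list of candidates to move higher in the site structure.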

Most importantly, remember the obvious: full-text search engines index text. You may well be tempted to use fancy and expensive design techniques that either block search engine crawlers or leave your pages with very little plain text that can be indexed. Do not fall prey to that temptation.

How do I check my website’s ranking on Google?

There are different ways to check your website’s ranking on Google. Here are some of them:

- You can manually search for your target keyword on Google and see where your website appears. However, this may not be accurate because Google personalizes the search results based on your location, language, and device.

- You can use a free keyword rank checker tool like this one. Just enter your keyword, website, and location, and it will show you your ranking position, SEO metrics, and the top 10 results. You can do this for all your keywords.

- Sign up for a free tool like Ahrefs or Google Search Console. These tools can show you all the keywords your website ranks for, the ranking URL, the ranking position, the search volume, the traffic, and more. However, they have some limitations, such as the number of keywords and the accuracy of rankings.

- You can use a paid rank tracking tool like Ahrefs Rank Tracker. This tool can track your rankings for many keywords over time and show you the ranking changes, the SERP features, the traffic, and more. You can also track your competitors and see how you compare to them, and you can track your rankings in specific locations, which is helpful for local keywords.

If this information helped you learn about the Google Algorithm Update, please give us feedback.