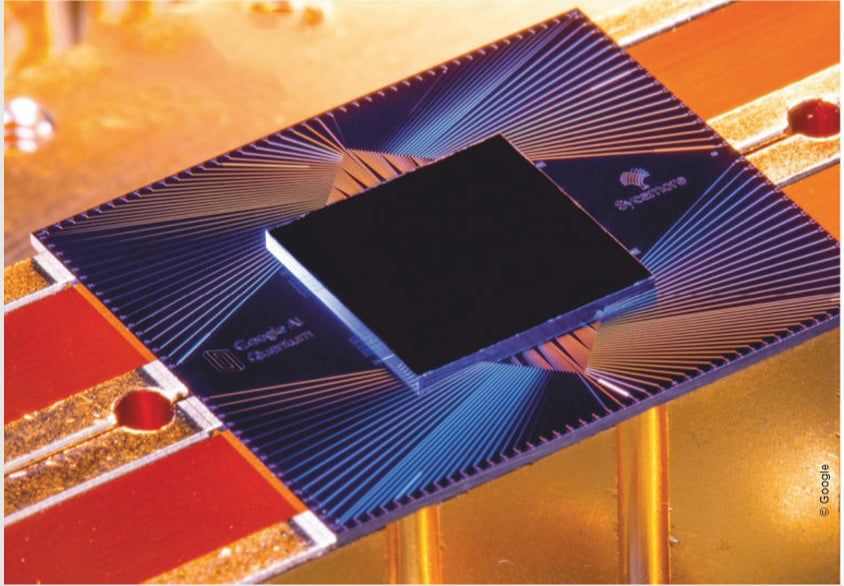

Google says it has achieved “quantum supremacy”. This is a fancy way of saying its Sycamore processor can do something extraordinary: it solved a random maths problem in three minutes and 20 seconds.

The search giant says a state-of-the-art supercomputer would struggle to do it in less than 10,000 years. This is because Sycamore isn’t just an upgrade to existing technology… it’s a completely different way of working.

A new generation of number-crunchers is harnessing the power of particles.

Sycamore is a “quantum computer”, which means it’s powered by the strange behavior of particles.

This advanced processing power could help cure dementia or develop artificial intelligence, so it’s no surprise that other tech firms are working hard to build their own versions.

Even governments are investing billions in their own research. In fact, the rivalry between China and the United States has been called the “space race of the 21st century”.

FEATURE (Quantum Computers)

To understand how quantum computing works, you need to wrap your head around a mind-blowing fact: objects can be in two places at once.

This is very hard to grasp, in part because it’s not how we perceive things, and also because for centuries Isaac Newton and other scientists have said the world follows predictable patterns.

For instance, an apple always falls down to the ground, even if it bonks you on the head first. And if you take that apple home and put it in your kitchen, you’re not suddenly going to find it in your bathroom.

But these rules don’t apply at the subatomic level. This is what ‘quantum’ means: the smallest amount – or quantity – we can measure, the building blocks of the universe.

In the early 20th century, scientists like Niels Bohr, Werner Heisenberg, and Erwin Schrödinger found that although a particle can turn up almost anywhere, there is no certainty of finding it in any particular place.

This is because particles can be in two places at once. For instance, electrons spin both up and down simultaneously.

Physicists call this behavior ‘superposition’. To complicate matters, superposition only happens when we’re not looking. The moment we try and measure it, the particles lose their uncertain state and only spin up or down. The best physicists can do is work out the chance of which state they will appear in when observed.
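The idea that a measurement only ever returns “up” or “down”, with physicists able to predict just the odds, can be sketched in a few lines of Python. This is a toy illustration, not a real quantum simulation: the names `measure_spin` and `estimate_up_fraction` are made up for this example, and the 50/50 probability is an assumption chosen for simplicity.

```python
import random

def measure_spin(prob_up, rng):
    """Simulate one measurement: the superposition collapses to 'up' or 'down'."""
    return "up" if rng.random() < prob_up else "down"

def estimate_up_fraction(prob_up, shots, seed=0):
    """Repeat the measurement many times and report how often 'up' appears."""
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    ups = sum(measure_spin(prob_up, rng) == "up" for _ in range(shots))
    return ups / shots

# A single measurement is unpredictable, but the long-run fraction
# settles close to the assumed probability of 0.5.
print(measure_spin(0.5, random.Random()))
print(estimate_up_fraction(0.5, shots=10_000))
```

Each individual result is one definite value; only the statistics over many repeated measurements reveal the underlying chance.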

As if this wasn’t weird enough, particles can also be ‘entangled’ in pairs or groups. They become deeply linked to one another, so you can’t change one without the other changing as well.

Albert Einstein called this “spooky action at a distance” because it works even if the particles are at opposite ends of the universe.
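A rough way to picture entanglement is to sample measurement results from a pair whose outcomes always agree. The sketch below assumes one specific textbook example (a so-called Bell state, in which only the results 0-0 and 1-1 are possible); the names `BELL_STATE` and `sample_pair` are illustrative. It shows the correlation in the results only, and says nothing about how the link is physically maintained.

```python
import math
import random

# Amplitudes for an assumed entangled pair: only "00" and "11" can occur.
BELL_STATE = {
    "00": 1 / math.sqrt(2),
    "01": 0.0,
    "10": 0.0,
    "11": 1 / math.sqrt(2),
}

def sample_pair(state, rng):
    """Measure both particles once; the chance of each result is amplitude squared."""
    outcomes = list(state)
    weights = [abs(amplitude) ** 2 for amplitude in state.values()]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(42)
results = [sample_pair(BELL_STATE, rng) for _ in range(10)]
print(results)  # every entry is either "00" or "11", never "01" or "10"
```

Neither particle’s result can be predicted in advance, yet once one is known, the other is certain.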

In 1981, physicist Richard Feynman told a lecture hall at the California Institute of Technology that it was time to reinvent the computer.

That’s the same year IBM launched its ‘personal computer’, or ‘PC’ for short. And it would still be another decade before these devices became everyday items.

But all computers – from those early IBMs to your modern-day MacBook – work by processing ‘bits’ of information. Each bit represents the value of one or zero.

This binary code forms the basis of all the calculations a computer can process. And the more bits a computer has, the harder the task it can handle.

A quantum computer, Feynman proposed, would use a quantum bit, or ‘qubit’. These would exist in superposition, so they can hold both one and zero at the same time.

If you were to quantum entangle two qubits, they could hold four values at once: 1-0, 0-1, 1-1, and 0-0. As the number of qubits grows, a quantum computer very quickly becomes more powerful than a conventional one, so it can process information in a fraction of the time.
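To see how quickly the number of combinations grows, the short Python sketch below lists every one/zero pattern for a given number of qubits. The function name `basis_states` is chosen for this example only.

```python
from itertools import product

def basis_states(num_qubits):
    """List every one/zero combination that num_qubits qubits can hold."""
    return ["-".join(bits) for bits in product("01", repeat=num_qubits)]

for n in (1, 2, 3):
    states = basis_states(n)
    print(n, "qubit(s):", len(states), "combinations:", ", ".join(states))
```

Two qubits give the four values listed above, three give eight, and each extra qubit doubles the count again.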

While Feynman provided a blueprint for how the technology could work, actually building a quantum computer proved much harder. Qubits are made from individual atoms or subatomic particles.

Just trying to control them risks making them lose their quantum properties. Even linking them together took years of work, with the first two-qubit computer appearing in 1998.

This all changed over 20 years ago when superconducting circuits were pioneered in Japan. The approach involves cooling qubits to around -273 degrees Celsius, close to absolute zero, using powerful fridges.

Using this method, Intel has achieved 49 qubits, and IBM boasts 53. Google’s game-changing Sycamore processor also has 53 qubits, but the tech giant’s already built another with 72.

A start-up with US$119.5 million in funding called Rigetti even says it’s working on a 128-qubit system.

But it’s harder to cool large objects than it is smaller ones, especially when you need them to be colder than the depths of space. So just as superconducting qubits have reached the size that they can achieve quantum supremacy, they may be about to outgrow the refrigerators they rely on.

One alternative is to use ions, which are atoms with an electrical charge. These can be trapped using microchips that emit electric fields. Each microchip can then be used as a qubit. Crucially, these can work at room temperature, so they solve the fridge problem.

But trapped ions have only been tested in labs, and will take a long time to build on the industrial scale that’s needed. Microsoft, meanwhile, is experimenting with topological qubits, which would be less sensitive to temperature, but this involves splitting electrons.

You could say this technology is in a quantum state of its own: it’s both making important breakthroughs and at the same time we’re only just beginning to understand it.

Bits vs qubits

Traditional computers rely on silicon transistors that work like switches to encode bits of data, representing either one or zero. But no matter how many billions of transistors we pack into them, they can only be in one state (a particular combination of ones and zeros) across those billions of transistors. But a qubit, made from a particle, can be both one and zero at the same time thanks to superposition.

So while ten bits give you 1,024 combinations of ones and zeroes, of which they can represent only one number between zero and 1,023 at a time, ten qubits can encode all 1,024 numbers simultaneously.

Through quantum entangling, 20 qubits can encode over a million numbers at once. A hundred qubits would, in theory, be more powerful than all the supercomputers in the world combined.
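The figures above follow from simple doubling: n bits or qubits have 2 to the power of n combinations. The Python sketch below checks the arithmetic and adds one rough, assumed yardstick: how much memory a conventional computer would need just to write down every combination’s weighting, at 16 bytes per value (a common size for a complex number, used here as an assumption). The function names are illustrative.

```python
def num_combinations(n):
    """Number of distinct one/zero patterns across n bits or qubits."""
    return 2 ** n

def statevector_bytes(num_qubits, bytes_per_amplitude=16):
    """Rough memory needed to store one value per combination (assumed 16 bytes each)."""
    return num_combinations(num_qubits) * bytes_per_amplitude

print(num_combinations(10))  # 1024
print(num_combinations(20))  # 1048576
print(f"{statevector_bytes(100):.1e} bytes for 100 qubits")
```

For 100 qubits the estimate comes to roughly 2 followed by 31 zeros in bytes, which gives a sense of why simulating such a machine on conventional hardware is considered out of reach.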

Performing operations

A conventional computer operates on individual bits, finding outcomes that are either one or zero. This means it can only perform calculations one at a time. A quantum computer, however, uses all its qubits, which are linked by quantum entanglement, simultaneously. This means that with 20 qubits it can, in effect, work on a million calculations at once.

This makes quantum computers exponentially faster and more powerful. However, the slightest interference – from a change in temperature, electromagnetism, a sound wave, or physical vibration – can make qubits stop existing in multiple states at once. This can introduce errors in calculations or even slow quantum computers down to the speeds of regular ones.

Don’t bin your laptop

The power and speed of quantum computing will make even the next generation of supercomputers obsolete. So-called ‘exascale’ computers will be able to perform a billion billion calculations per second.

That’s an impressive 10 to the power of 18 operations, but quantum computers could, in theory, carry out 10 to the power of 1,000.

But supercomputers are extremely specialist tools. When quantum computers replace them, they won’t be carrying out even ten percent of the world’s computing tasks.

The average user needs a lot less computing power day-to-day and enjoys the portability that quantum computing may never be able to provide.

If this changes, users could still connect to a quantum computer using a conventional computer via the cloud.

Question and Answer

Prof Winfried Hensinger of the University of Sussex puts Google’s achievement into perspective.

How significant is Google’s breakthrough?

It’s a technical next step, but it’s not necessarily as significant as some people think. What Google has done is solved an academic problem – one that is entirely useless in a practical sense. It’s a problem that’s chosen with one thing in mind: whether a quantum computer can do something a conventional computer can’t.

When do you think quantum computing will come into its own?

Quantum computing will likely follow the same development path as conventional computers. In the 1940s, conventional computers decided World War II by breaking the German Enigma code. However, while they were good for a particular task, they couldn’t tell you train times, handle word processing, or play video games.

It was another 40 to 50 years until we used them for literally everything. It’s a gradual process. Quantum computers now are probably approaching those in the 1940s. In the next five years, these machines will be able to solve one particular practical problem.

![Quantum Computing Explained](https://newscutzy.com/wp-content/uploads/2020/02/Quantum-Computing.jpg)