How Does Computer Memory Work: IT DOESN’T MATTER whether your electronic device of choice is a laptop, PC, camera, smartphone, tablet, or glasses. Even though their capabilities differ wildly, all devices work using the same basic foundation of computing, the transistor.

The processors in the first personal computers could work with 16 bits at a time, which limited them to numbers no bigger than the decimal number 65,535. Why, that’s not big enough to count all the people in Murfreesboro, Tennessee! Luckily, processor upgrades gave PCs the ability to work with 32 bits at a time, taking them up to the decimal number 4,294,967,295.

Not bad, but the processors in common use in 2014 and (spoiler alert!) for a long time into the future can juggle 64 bits with the ease of The Flying Karamazov Brothers throwing swords back and forth, and a lot more easily than the boys could juggle 18,446,744,073,709,551,615 swords, the decimal equivalent of 64 bits.
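The limits quoted for each processor generation follow from one formula: the largest unsigned number that fits in n bits is 2^n - 1. Here is a minimal Python sketch of that arithmetic; the function name `max_unsigned` is just an illustrative choice.

```python
def max_unsigned(bits: int) -> int:
    """Largest unsigned integer that fits in the given number of bits."""
    return 2**bits - 1


for width in (16, 32, 64):
    # The ',' format option adds thousands separators for readability.
    print(f"{width}-bit: {max_unsigned(width):,}")

# 16-bit: 65,535
# 32-bit: 4,294,967,295
# 64-bit: 18,446,744,073,709,551,615
```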

Chapter 2: Technology Illustrated Guide – How Does Computer Memory Work?

A processor being able to tackle more bits simultaneously doesn’t result only in bigger numbers. Higher bit counts mean more data and instructions can be moved from memory into the processor in a single trip. That means faster computing.

It’s a mistake to think of transistors in terms of numbers alone, however. The same on/off bits can stand for true and false, enabling devices to work with Boolean logic. (“Select this AND this but NOT this.”) Combinations of transistors in different arrangements are called logic gates, which are combined into arrays called half adders, which in turn are combined into full adders, allowing the processor to calculate with numbers instead of merely counting.
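To see how gates become arithmetic, here is a rough Python sketch in which the bitwise operators `^` (XOR), `&` (AND), and `|` (OR) stand in for logic gates. The function names and the 8-bit default width are assumptions made for illustration; a real processor wires these up in silicon rather than in software.

```python
from typing import Tuple


def half_adder(a: int, b: int) -> Tuple[int, int]:
    """Add two bits. Returns (sum_bit, carry_bit)."""
    return a ^ b, a & b  # XOR gives the sum, AND gives the carry


def full_adder(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """Add two bits plus an incoming carry, using two half adders and an OR."""
    partial_sum, carry1 = half_adder(a, b)
    sum_bit, carry2 = half_adder(partial_sum, carry_in)
    return sum_bit, carry1 | carry2


def add_bits(x: int, y: int, width: int = 8) -> int:
    """Add two numbers one bit at a time by chaining full adders."""
    result = 0
    carry = 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # a carry out of the top bit is dropped, so the sum wraps


print(add_bits(5, 3))      # 8
print(add_bits(200, 100))  # 44, because 300 does not fit in 8 bits
```

Chaining one full adder per bit like this is the simplest arrangement; it shows why wider processors need more transistors to add bigger numbers in one step.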

Transistors make it possible for a small amount of electrical current to control a second, much stronger current—just as the small amount of energy needed to throw a wall switch controls the more powerful energy surging through the wires to give life to a spotlight.

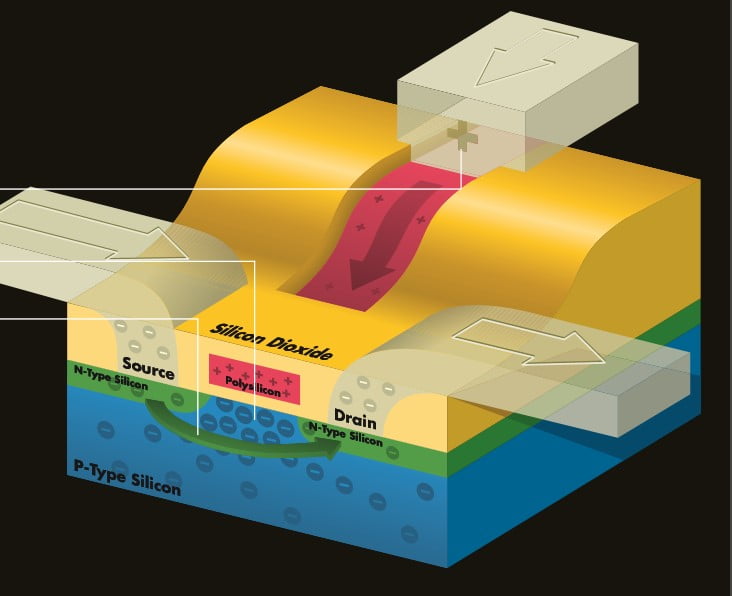

How a Little Transistor Does Big Jobs

THE MOST IMPORTANT INVENTION of the 20th century and the thing that will most influence technical and social progress in the 21st century is simply a switch. Well, not simply a switch. It’s a transistor switch, a cousin of the wall switch that closes to let electricity flow through it or opens to block the current.

The difference between the cousins is that the transistor can be made exceedingly small. Its size gives it the ability to turn off and on so rapidly that the time it takes to close or open is measured in millionths of a second or less. And its size lets us join transistors into mighty armies that we call microprocessors, capable of conquering the prickly, unexplored wildernesses of science, music, and thought.

1. A small, positive electrical charge is sent down one aluminum lead that runs into the transistor. The positive charge spreads to a layer of electrically conductive polysilicon buried in the middle of nonconductive silicon dioxide. Silicon dioxide is the main component of sand and the material that gave Silicon Valley its name.

2. The positive charge attracts negatively charged electrons from the base made of P-type (positive) silicon that separates two layers of N-type (negative) silicon.

3. The rush of electrons out of the P-type silicon creates an electronic vacuum that is filled by electrons rushing from another conductive lead called the source. In addition to filling the vacuum in the P-type silicon, the electrons from the source also flow to a similar conductive lead called the drain. The rush of electrons completes the circuit, turning on the transistor so that it represents a 1 bit. If a negative charge is applied to the polysilicon, electrons from the source are repelled and the transistor is turned off.

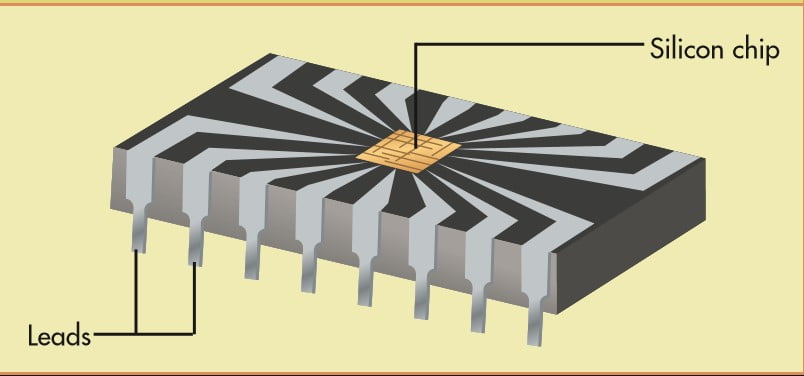

The Birth of a Microchip

The first transistor, shown in the photo, was invented at Bell Labs in 1947. The device, made of two gold electrical contacts and a germanium crystal, was built on a human scale, which makes its workings easily visible.

But to be really useful, a transistor needs other transistors—so many that creating them on Bell Labs’ human scale would guarantee only gargantuan gadgets.

Scientists quickly developed ways that reduced the size and increased the efficiency of transistors. Today, a microchip is conceived on a computer that converts the engineers’ logical concepts into physical designs used to fabricate the soul of a chip.

The body of the chip is born of a thin, highly polished slice of silicon crystal grown in the lab. Although silicon is the second-most abundant element in the earth’s crust, it doesn’t occur naturally in its pure form.

How Does Computer Memory Work: Building a Microprocessor

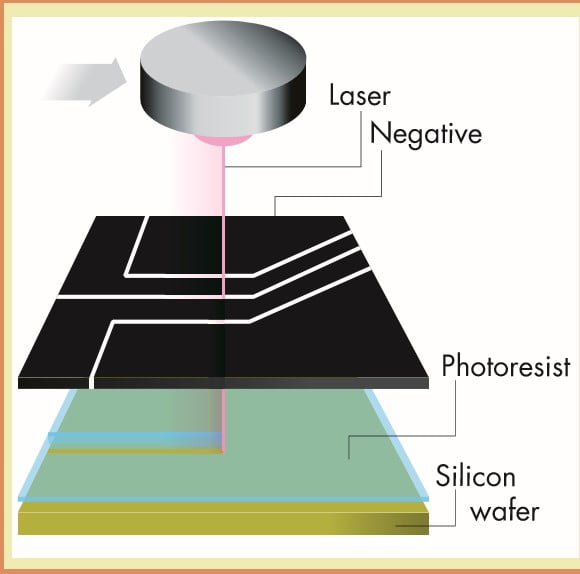

In a photoetching process, a chemical called photoresist covers the silicon wafer’s surface. Intense, thin beams of light are shone through a negative produced from the engineers’ CAD designs. Or, the computer might guide a beam of electrons to trace the chip design.

The photoresist that has been touched by beams of light or electrons is washed away, and where the silicon is no longer protected by the photoresist, an acid bath etches channels into the silicon’s surface. These channels are filled with conductive materials to create the circuits along which the computer’s digital signals travel.

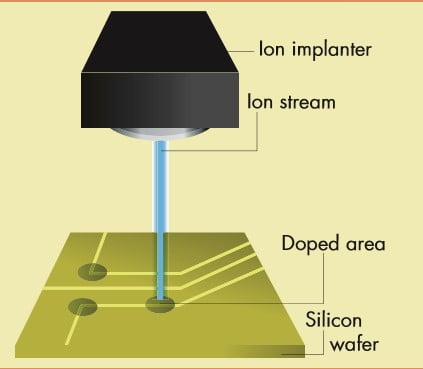

Ion implanters next dope the silicon with impurities by shooting ions into the silicon. By itself, silicon doesn’t conduct electricity well, but when doped, it becomes a semiconductor. The procedure is repeated for as many as 8 to 12 other layers of a microchip, connecting the layers along the way.

The use of layers makes it possible for more than one circuit to cross each other in the microchip’s overall design without actually clashing on the same layer. The process can take weeks to complete but still manages to produce microprocessors in the quantities hungered for by new machines and their creators.

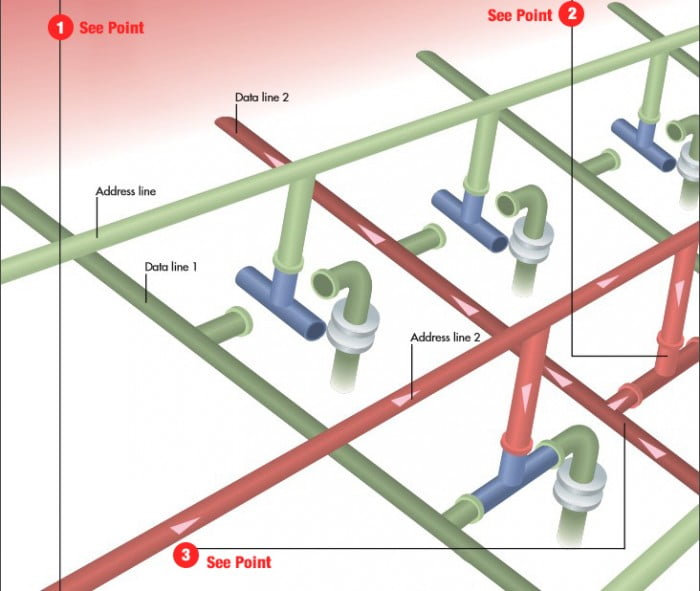

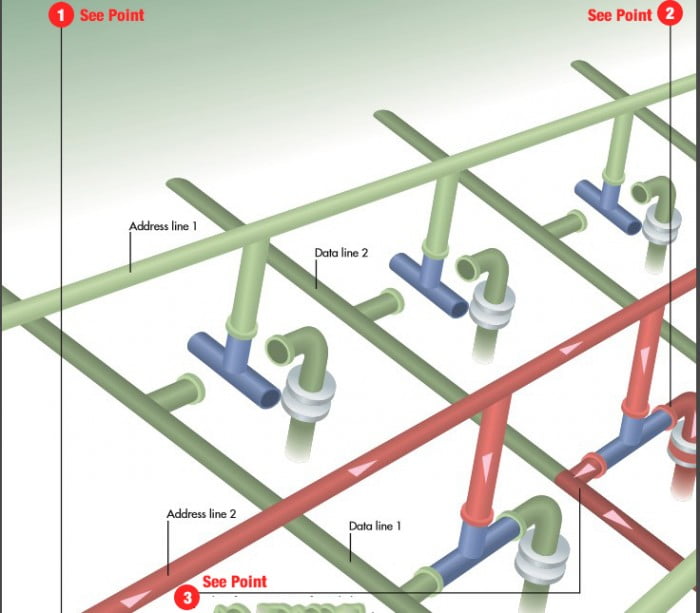

How Does Computer Memory Work: Writing Data to RAM

1. Software, in combination with the operating system, sends a burst of electricity along an address line, which is a microscopic strand of electrically conductive material etched onto a RAM chip. Each address line identifies the location of a spot in the chip where data can be stored. The burst of electricity identifies where to record data among the many address lines in a RAM chip.

2. The electrical pulse turns on (closes) a transistor that’s connected to a data line at each memory location in a RAM chip where data can be stored. A transistor is basically a microscopic electronic switch.

3. While the transistors are turned on, the software sends bursts of electricity along selected data lines. Each burst represents a 1 bit. The 1 bit and the 0 bit make up the native language of processors, the most basic unit of information that a computer manipulates.

4. When the electrical pulse reaches an address line where a transistor has been turned on, the pulse flows through the closed transistor and charges a capacitor, an electronic device that stores electricity. This process repeats itself continuously to refresh the capacitor’s charge, which would otherwise leak out.

When the computer’s power is turned off, all the capacitors lose their charges. Each charged capacitor along the address line represents a 1 bit. An uncharged capacitor represents a 0 bit. The computer uses 1 and 0 bits as binary numbers to store and manipulate all information, including words and graphics.

The illustration here shows a bank of eight switches in a RAM chip; each switch is made up of a transistor and capacitor. The combination of closed and open transistors here represents the binary number 01000001, which in ASCII notation represents an uppercase A.

The first of eight capacitors along an address line contains no charge (0); the second capacitor is charged (1); the next five capacitors have no charge (00000), and the eighth capacitor is charged (1).
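The mapping from a character to eight charged or uncharged capacitors can be sketched in Python. The helper names `char_to_bits` and `bits_to_char` are made up for this illustration; the only facts relied on are that an uppercase A has the ASCII code 65 and that 65 in binary is 01000001.

```python
def char_to_bits(ch: str) -> list:
    """Return the 8 bits of a character's code, most significant bit first."""
    code = ord(ch)
    return [(code >> shift) & 1 for shift in range(7, -1, -1)]


def bits_to_char(bits: list) -> str:
    """Rebuild the character from its 8 bits, most significant bit first."""
    code = 0
    for bit in bits:
        code = (code << 1) | bit
    return chr(code)


bits = char_to_bits("A")
print(bits)  # [0, 1, 0, 0, 0, 0, 0, 1]
print(["charged" if bit else "empty" for bit in bits])
print(bits_to_char(bits))  # A
```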

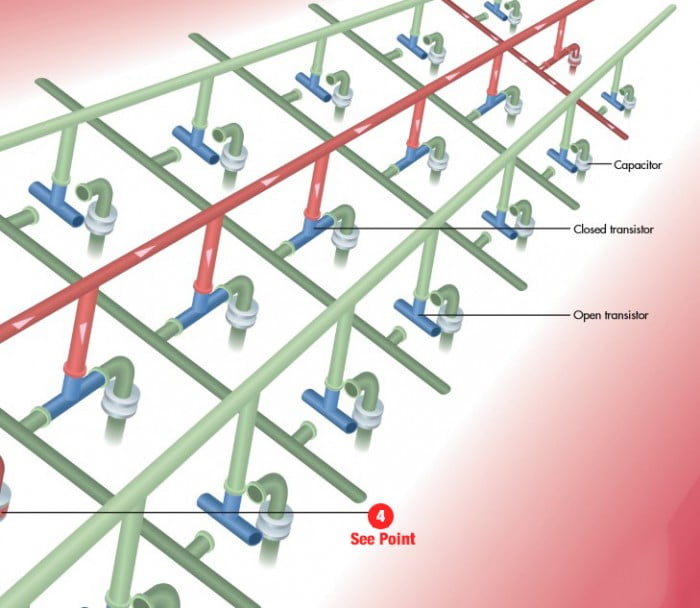

How Does Computer Memory Work: Reading Data from RAM

1. When software needs to read data stored in RAM, another electrical pulse is sent along the address line, once again closing the transistors connected to it.

2. Everywhere along the address line that there is a capacitor holding a charge, the capacitor will discharge through the circuit created by the closed transistors, sending electrical pulses along the data lines.

3. The software recognizes which data lines the pulses come from and interprets each pulse as a 1. Any line on which a pulse is lacking indicates a 0. The combination of 1s and 0s from eight data lines forms a single byte of data.
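The write and read steps can be mimicked with a toy Python model of a single address line. Everything here is a simplification for illustration: the class name `DramRow` and its methods are invented, and the step that recharges the capacitors after a read reflects how real DRAM restores a row, a detail the steps above do not spell out.

```python
class DramRow:
    """Toy model of one address line with eight transistor/capacitor cells."""

    def __init__(self, width: int = 8):
        self.capacitors = [0] * width  # 0 = uncharged, 1 = charged

    def write(self, bits: list) -> None:
        # The address line closes every transistor in the row; a pulse on a
        # data line charges that cell's capacitor, and no pulse leaves it empty.
        if len(bits) != len(self.capacitors):
            raise ValueError("one bit is required per data line")
        self.capacitors = list(bits)

    def read(self) -> list:
        # Charged capacitors discharge onto the data lines as pulses (1s).
        pulses = list(self.capacitors)
        self.capacitors = [0] * len(pulses)
        # Assumption of this model: the values are written straight back,
        # so the row still holds its data after being read.
        self.write(pulses)
        return pulses

    def power_off(self) -> None:
        # Without power, every capacitor loses its charge.
        self.capacitors = [0] * len(self.capacitors)


row = DramRow()
row.write([0, 1, 0, 0, 0, 0, 0, 1])  # the bit pattern for an uppercase A
print(row.read())   # [0, 1, 0, 0, 0, 0, 0, 1]
row.power_off()
print(row.read())   # [0, 0, 0, 0, 0, 0, 0, 0]
```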

How Chips Move More Data

The fastest processors are limited by how fast memory feeds them data. Traditionally, the way to pump out more data was to increase the clock speed. With each cycle, or tick, of the clock regulating operations in the processor and movement of memory data, synchronous dynamic random access memory (SDRAM), the kind illustrated here, could store a value or move a value out and onto the data bus headed to the processor. But the increasing speeds of processors outstripped that of random access memory (RAM). Memory narrowed the gap in two ways.

One is double data rate (DDR). Previously, a bit was written or read on each cycle of the clock. It’s as if someone loaded cargo (writing data) onto a train traveling from Chicago to New York, unloaded that cargo (reading data), and then had to send the empty train back to Chicago again, despite having fresh cargo in New York that could hitch along for the return trip. With DDR, a handler could unload that same cargo when the train arrives in New York and then load it up with new cargo before the train makes its journey back to Chicago.

This way, the train is handling twice as much traffic (data) in the same amount of time. Substitute memory controller for the persons loading and unloading cargo and clock cycle for each round-trip of the train, and you have DDR.

Another change to RAM, DDR2, doubled the data rate in a different way. It cut the speed of memory’s internal clock to half the speed of the data bus. DDR2 quickly evolved into DDR3, which halved the internal clock again relative to the data bus, and then into DDR4. A bonus effect of cutting memory speed is that the RAM uses less electricity: not a significant dent in the monthly electric bill, but it pays off with cooler-running, more reliable memory chips.
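The arithmetic behind both tricks can be sketched in a few lines of Python. The function name, the 200 MHz clock, and the 8-byte (64-bit) bus width are illustrative assumptions rather than figures from this guide.

```python
def peak_transfer_rate_mb_s(bus_clock_mhz: int,
                            transfers_per_cycle: int,
                            bus_width_bytes: int = 8) -> int:
    """Peak megabytes per second moved across the memory data bus."""
    return bus_clock_mhz * transfers_per_cycle * bus_width_bytes


internal_clock_mhz = 200  # assumed speed of the memory cells themselves

# Plain SDRAM: the bus runs at the internal clock, one transfer per cycle.
sdram = peak_transfer_rate_mb_s(internal_clock_mhz, 1)

# DDR: same clock, but data moves on both halves of each cycle.
ddr = peak_transfer_rate_mb_s(internal_clock_mhz, 2)

# DDR2: the internal clock is half the bus speed, so the same 200 MHz
# memory cells sit behind a 400 MHz data bus.
ddr2 = peak_transfer_rate_mb_s(internal_clock_mhz * 2, 2)

print(sdram, ddr, ddr2)  # 1600 3200 6400
```

In this sketch the memory cells never run faster than 200 MHz, yet each step doubles how much data reaches the processor.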

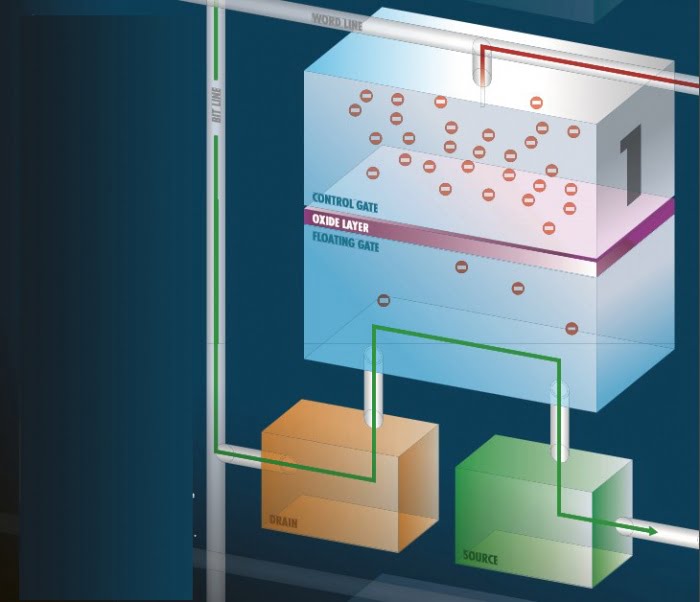

How Flash Memory Remembers When the Switch Is Off

WHETHER IT’S IN A DESKTOP or laptop computer, data in RAM that’s not saved to disk disappears when the computer is turned off. But computers that have evolved into smartphones, tablets, cameras, and other handhelds don’t have disk drives for storage. They all use memory chips.

And yet when you turn off your smartphone, your contacts, music, pictures, and apps are still there when you turn it back on. That’s because mobile devices don’t use ordinary RAM. They use flash memory that freezes data in place.

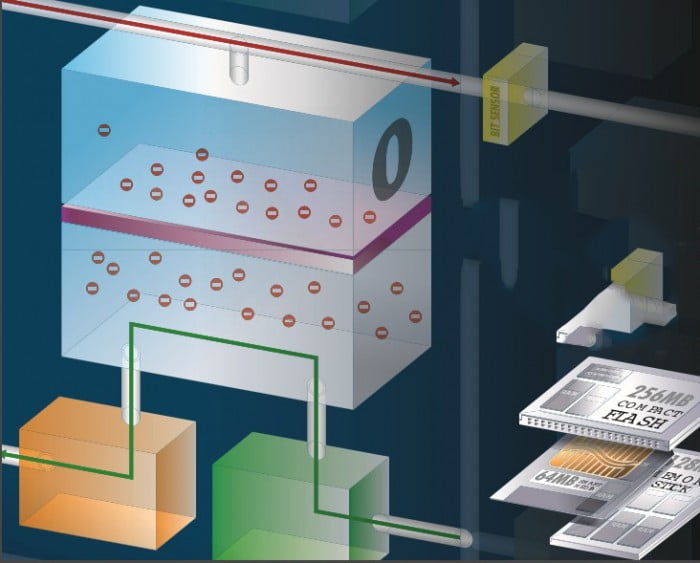

1. Flash memory is laid out along a grid of printed circuits running at right angles to one another. In one direction, the circuit traces are word addresses; circuits at a right angle to them represent the bit addresses. The two addresses combine to create a unique numerical address called a cell.

2. The cell contains two transistors that together determine if an intersection represents a 0 or a 1. One transistor—the control gate—is linked to one of the passing circuits called the word line, which determines the word address.

3. A thin layer of metal oxide separates the control gate from a second transistor, called the floating gate. When an electrical charge runs from the source to the drain, the charge extends through the floating gate, on through the metal oxide, and through the control gate to the word line.

4. A bit sensor on the word line compares the strength of the charge in the control gate to the strength of the charge on the floating gate. If the control voltage is at least half of the floating gate charge, the gate is said to be open, and the cell represents a 1. Flash memory is sold with all cells open. Recording to it consists of changing the appropriate cells to zeros.

5. Flash memory uses Fowler-Nordheim tunneling to change the value of the cell to zero. In tunneling, a current from the bit line travels through the floating gate transistor, exiting through the source to the ground.

6. Energy from the current causes electrons to boil off the floating gate and through the metal oxide, where the electrons lose too much energy to make it back through the oxide. They are trapped in the control gate, even when the current is turned off.

7. The electrons have become a wall that repels any charge coming from the floating gate. The bit sensor detects the difference in charges on the two transistors, and because the charge on the control gate is below 50 percent of the floating gate charge, it is considered to stand for a zero.

8. When it comes time to reuse the flash memory, a current is sent through in-circuit wiring to apply a strong electrical field to the whole chip, or to predetermined sections of the chip called blocks. The field energizes the electrons so they are once again evenly dispersed.
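The steps above boil down to two rules: recording can only change 1s to 0s, and getting the 1s back means erasing a whole block at once. A rough Python sketch of those rules follows. The class `FlashBlock`, its methods, and the four-byte block size are invented for illustration; each list entry stands for eight cells.

```python
class FlashBlock:
    """Toy model of one erasable block of flash memory."""

    def __init__(self, size: int = 4):
        # Fresh or erased flash: every cell is open, so every bit reads as 1.
        self.cells = [0xFF] * size

    def program(self, index: int, value: int) -> None:
        # Recording can only turn 1s into 0s, never 0s back into 1s, so the
        # stored result is the bitwise AND of the old and new values.
        self.cells[index] &= value & 0xFF

    def erase(self) -> None:
        # Erasing works on the whole block, resetting every bit to 1.
        self.cells = [0xFF] * len(self.cells)


block = FlashBlock()
block.program(0, 0x41)       # 0xFF AND 0x41: stores an uppercase A
print(hex(block.cells[0]))   # 0x41
block.program(0, 0xF0)       # cannot restore 1s: 0x41 AND 0xF0
print(hex(block.cells[0]))   # 0x40
block.erase()
print(hex(block.cells[0]))   # 0xff
```

The second `program` call shows why flash has to be erased before it is reused: writing new data over old data without an erase can only clear more bits.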

Forms of Flash

Flash memory is sold in a variety of configurations, including SmartMedia, Compact Flash, and Memory Sticks, used mainly in cameras and MP3 players. There are also USB-based flash drives, which look like packs of gum sticking out of the sides of computers and are handy, in a way once reserved for floppies, for moving files from one computer to another. The form factors, storage capacities, and read/write speeds vary. Some include their own controllers for faster reads and writes.
